In recent months, a subtle but consequential shift has begun to take hold inside some of the world’s most advanced technology organizations. Engineers are no longer just measured by output or efficiency. Increasingly, they are being judged by something far less visible to the outside world: how much AI they use.

The metric is not hours worked or features shipped. It is tokens: the units of text that large AI models read and generate, and the basis on which their usage is metered and billed.

The trend has acquired a name: “tokenmaxxing.” And while it may sound like an internal cultural quirk, it points to a much larger transformation in how enterprises will experience—and pay for—AI.

From Productivity Tool to Economic Signal

According to reporting in The New York Times, engineers at leading AI companies such as Meta and OpenAI have begun competing on internal leaderboards that track token usage. The more tokens an engineer consumes, the stronger the perceived signal of productivity, experimentation, and technical fluency.

In parallel, leaders across the industry are reinforcing this behavior. At a recent conference, Nvidia CEO Jensen Huang suggested that companies should expect top engineers to consume significant amounts of AI compute—potentially hundreds of thousands of dollars annually in token usage—as a natural extension of their work.

What was once an invisible backend metric is rapidly becoming:

- A proxy for output

- A signal of ambition

- And, increasingly, a line item in corporate budgets

When Compute Becomes Compensation

This shift is not limited to internal metrics. It is beginning to reshape how companies think about talent itself.

As explored by TechCrunch, some firms are now considering token budgets as part of compensation packages, effectively treating compute as a fourth pillar alongside salary, bonus, and equity.

The logic is straightforward:

If AI amplifies human productivity, then access to more compute directly increases an employee’s value.

But this raises an uncomfortable question for enterprise leaders:

👉 If token spend per employee approaches—or exceeds—their salary, what exactly are you optimizing for?

The Cost That Doesn’t Show Up Where You Expect

For CIOs and CFOs, the more immediate concern is not cultural—it is financial.

Unlike traditional IT costs, token consumption:

- Is usage-based, not fixed

- Scales with behavior and iteration, not just workload

- And is often embedded inside tools, vendors, and workflows

As a result, an organization's actual AI spend can rise well before the increase shows up in any budget it is tracking.
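To make the usage-based point concrete, here is a minimal sketch of how one person's monthly token bill might be estimated. The per-token prices, the function name `monthly_token_cost`, and the two usage profiles are all hypothetical, chosen only to show how the total scales with behavior; real prices vary by vendor and model.

```python
# Hypothetical prices in USD per million tokens (assumptions, not real vendor rates).
PRICE_PER_MILLION_INPUT = 3.00
PRICE_PER_MILLION_OUTPUT = 15.00


def monthly_token_cost(requests_per_day, input_tokens, output_tokens, working_days=21):
    """Estimate one user's monthly spend from request volume and tokens per request."""
    per_request = (
        input_tokens * PRICE_PER_MILLION_INPUT
        + output_tokens * PRICE_PER_MILLION_OUTPUT
    ) / 1_000_000
    return requests_per_day * working_days * per_request


# Two illustrative usage profiles (hypothetical figures).
light = monthly_token_cost(requests_per_day=20, input_tokens=2_000, output_tokens=500)
heavy = monthly_token_cost(requests_per_day=200, input_tokens=20_000, output_tokens=2_000)

print(f"light user: ${light:,.2f} per month")
print(f"heavy user: ${heavy:,.2f} per month")
```

Under these assumed numbers the heavy user costs roughly $378 a month against under $6 for the light one. Nothing about the tool or the contract changed between the two; only behavior did.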

Executives are already starting to take notice. Aaron Levie, CEO of Box, recently warned that companies will need to rethink how they budget for AI, as token usage expands beyond engineering into functions like legal, sales, and operations.

What begins as a developer productivity tool quickly becomes:

👉 An enterprise-wide consumption layer

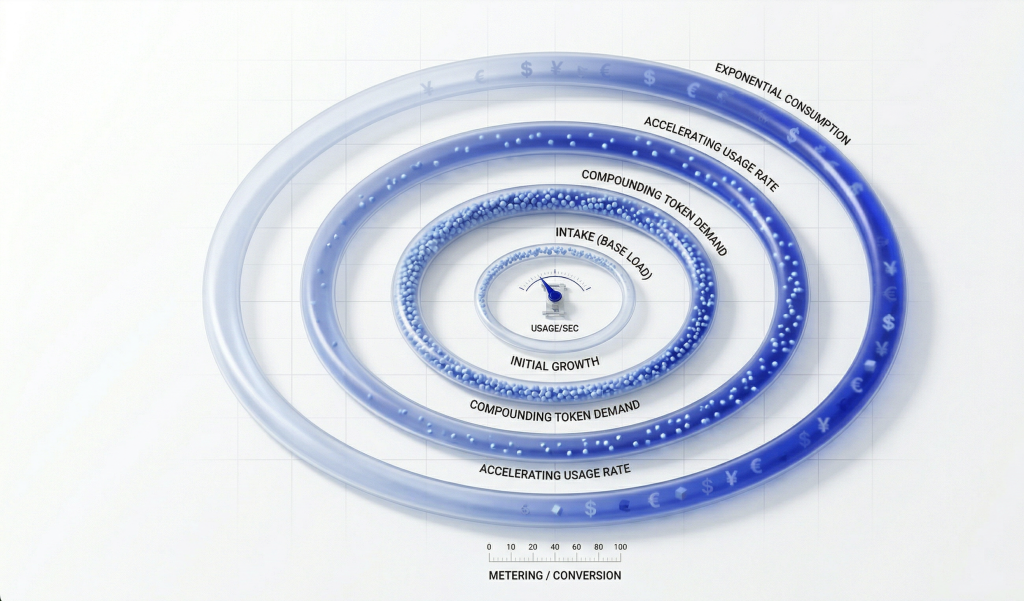

The Agent Effect: From Usage to Accumulation

If tokenmaxxing reflects human behavior, AI agents represent its amplification.

Unlike humans, agents:

- Operate continuously

- Execute tasks in loops

- Chain multiple model interactions

This creates a new dynamic: persistent consumption.

As Huang noted, the future of computing will not be idle machines waiting for input, but systems running continuously—generating tokens as agents work autonomously in the background.

In this environment, cost is no longer tied to discrete actions. It becomes:

👉 An ongoing, compounding function of system design
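One way to see why the cost compounds is a rough sketch of an agent loop in which every step re-sends the full conversation so far as input. The function name `agent_run_input_tokens` and the default sizes are hypothetical, and the sketch assumes no caching, truncation, or summarization of context, any of which would lower the totals.

```python
def agent_run_input_tokens(steps, base_context=2_000, tokens_added_per_step=1_000):
    """Total input tokens billed when each step re-sends the whole history so far.

    Assumption: context only grows; nothing is cached, trimmed, or summarized.
    """
    total = 0
    context = base_context
    for _ in range(steps):
        total += context                   # the full context is sent as input on this step
        context += tokens_added_per_step   # model output and tool results are appended
    return total


for steps in (10, 20, 40):
    print(steps, "steps ->", agent_run_input_tokens(steps), "input tokens")
```

With these assumed sizes, doubling a run from 10 to 20 steps raises input tokens from 65,000 to 230,000, more than a threefold increase. The growth comes from how the loop is designed, not from any single expensive call.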

A Familiar Pattern—With Higher Stakes

There is a historical parallel here.

In the early days of cloud computing, organizations initially focused on access and scalability. Only later did they confront the realities of unit economics: cost per unit of compute, per workload, per transaction.

AI is following a similar trajectory, but with one critical difference:

👉 The cost driver is not just infrastructure. It is cognition itself.

Tokens represent the cost of processing language, context, and reasoning. They are, in effect, the price of machine “thought.”

And like cloud before it, this cost is easy to ignore—until it isn’t.

What This Means for Enterprise Leaders

The emergence of token economics signals a broader shift:

- AI is moving from tool to infrastructure

- Costs are moving from fixed to variable

- And value is moving from activity to outcomes

Organizations that continue to measure success by usage—more prompts, more agents, more automation—may find themselves optimizing for the wrong variable.

Because in a token-based economy:

👉 More AI does not necessarily mean better economics
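A small sketch shows how usage and economics can diverge once spend is divided by outcomes rather than counted on its own. The team names, token counts, outcome counts, and the blended price below are all hypothetical, and what counts as an "outcome" (a resolved ticket, a closed contract review) is an assumption each organization would have to define for itself.

```python
from dataclasses import dataclass

PRICE_PER_MILLION_TOKENS = 10.00  # hypothetical blended price in USD


@dataclass
class TeamUsage:
    name: str
    tokens_used: int
    outcomes_delivered: int  # e.g. tickets resolved; the definition is an assumption


def cost_per_outcome(team):
    """Token spend divided by delivered outcomes; infinite if nothing was delivered."""
    spend = team.tokens_used / 1_000_000 * PRICE_PER_MILLION_TOKENS
    if team.outcomes_delivered == 0:
        return float("inf")
    return spend / team.outcomes_delivered


teams = [
    TeamUsage("team_a", tokens_used=900_000_000, outcomes_delivered=300),
    TeamUsage("team_b", tokens_used=200_000_000, outcomes_delivered=250),
]

for team in sorted(teams, key=cost_per_outcome):
    print(f"{team.name}: ${cost_per_outcome(team):.2f} per outcome")
```

In this made-up comparison, team_a would top a token leaderboard yet pays $30 per outcome, while team_b pays $8. A usage ranking and a cost-per-outcome ranking can point in opposite directions.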

The Question That Matters Now

The most important question for CIOs is no longer whether their organization is adopting AI.

It is:

👉 Do we understand what each AI-driven outcome actually costs?

Until that question is answered with clarity, token consumption will remain what it is today:

A powerful engine of productivity—

and an equally powerful source of hidden spend.

References:

- Reporting on “tokenmaxxing” and internal usage dynamics from The New York Times

- Industry perspectives on token budgets and compensation from TechCrunch

- Executive commentary on rising token costs and enterprise budgeting from Business Insider